Getting a website noticed in an ever-expanding internet landscape takes plenty of discipline and curiosity, and one of the fundamental steps to SEO success is a sitemap.

A sitemap is a roadmap to your website, and the Sitemaps protocol defines how that roadmap is written. Useful sitemaps allow search engines to crawl your site more efficiently. Google first introduced the protocol in 2005, letting developers publish lists of links from across their sites.

Yahoo and Microsoft announced joint support for the protocol in November 2006, and in April 2007, Ask.com and IBM gave their backing as well. One of the significant advancements to the protocol was auto-discovery through the robots.txt file.

What Is A Sitemap?

A search engine’s first function is to scour the internet for new code and webpages. Its second function is to index that content and place it in a discoverable hierarchy. The final piece of the puzzle is displaying the content in response to relevant queries.

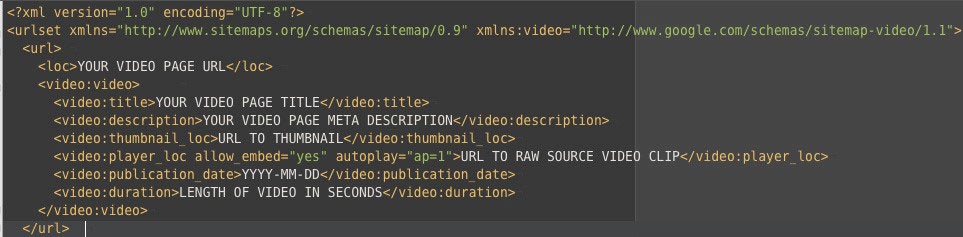

Sitemaps are the interface between a website and search engines. A Google sitemap is an XML file that lets webmasters tell crawler bots about new and changed URLs. Useful sitemaps also tell the bots when each URL was last updated and how important it is relative to other URLs on the site. This is what a sitemap can look like:

(Image Credit: DYNO Mapper)
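To see the file from the crawler's side, here is a minimal sketch that reads a small sitemap with Python's standard `xml.etree.ElementTree` and lists each URL with its optional last-modified date. The sample URLs and the `read_sitemap` name are illustrative assumptions; real crawlers do far more than this.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the Sitemaps protocol, version 0.90 (sitemaps.org).
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical two-page sitemap; bytes so the XML declaration is parsed as-is.
SAMPLE = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://www.example.com/</loc>
    <lastmod>2024-01-15</lastmod>
  </url>
  <url>
    <loc>https://www.example.com/blog/</loc>
  </url>
</urlset>"""

def read_sitemap(xml_bytes):
    """Return (loc, lastmod) pairs; lastmod is None when the tag is absent."""
    root = ET.fromstring(xml_bytes)
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", namespaces=NS)
        lastmod = url.findtext("sm:lastmod", namespaces=NS)
        entries.append((loc.strip(), lastmod))
    return entries

for loc, lastmod in read_sitemap(SAMPLE):
    print(loc, lastmod)
```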

Site owners often treat a sitemap as an SEO checkbox, but it should be fully understood that a sitemap is written for search engine crawl bots, not people. The more webmasters can do to improve this communication, the better.

Search engines send out crawler bots or spiders periodically to find updated content. Bots are small pieces of code that interface with the engine and your website. The more efficient your sitemap, the easier it is for a search engine to discover and index new content and links.

Crawling is the process of bots visiting websites based on a search engine’s algorithm, which weighs crawl frequency and other factors. Crawlers follow links to discover other pages, paying close attention to new content and to changes in existing subject matter.

Google and other engines have given webmasters granular control over when and how search bots crawl their sites. This is a significant advancement over the arcane policies of the past.

If you are setting up your Google sitemap, there are a few conditions that need to be followed:

- Place your sitemap in the root directory of your website. Under the Sitemaps protocol, a sitemap can only list URLs in its own directory and below, so a sitemap at the root can cover the entire site and is easy for bots to find.

- Every URL in the sitemap must use the same protocol and host as the sitemap itself. If the sitemap is served over http://, every URL must also begin with http://, not https://.

- Major search engines allow multiple sitemap files in a single directory for ease of crawling. A single sitemap file can contain at most 50,000 URLs, and webmasters can split their URLs across several smaller sitemaps to better reflect the website structure.

- Follow the sitemap formats from Sitemaps.org to establish the right schema for your site.
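The location rules above can be checked mechanically. This is a minimal sketch, assuming you already have the sitemap's own URL and the list of page URLs it will contain; `location_problems` is a hypothetical helper, not part of any tool.

```python
from urllib.parse import urlsplit

MAX_URLS_PER_SITEMAP = 50_000  # per-file limit from the Sitemaps protocol

def location_problems(sitemap_url, page_urls):
    """Return human-readable problems; an empty list means no rule was broken."""
    problems = []
    sitemap = urlsplit(sitemap_url)
    # Directory that holds the sitemap, e.g. "/" or "/catalog/".
    base_dir = sitemap.path.rsplit("/", 1)[0] + "/"
    if len(page_urls) > MAX_URLS_PER_SITEMAP:
        problems.append(f"{len(page_urls)} URLs exceeds the {MAX_URLS_PER_SITEMAP:,} limit")
    for url in page_urls:
        parts = urlsplit(url)
        path = parts.path or "/"  # "https://example.com" has an empty path
        if (parts.scheme, parts.netloc) != (sitemap.scheme, sitemap.netloc):
            problems.append(f"{url}: protocol or host differs from the sitemap")
        elif not path.startswith(base_dir):
            problems.append(f"{url}: outside {base_dir}, where the sitemap lives")
    return problems
```

For example, a sitemap at `https://example.com/catalog/sitemap.xml` would flag `https://example.com/about/`, while one at the root would accept it.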

SEO and Your Sitemap

Every website, from a brand-new single-page site to an eCommerce store with thousands of products, needs a sitemap, and its owner needs to know how to create XML sitemaps. Websites benefit when search engines can easily find their important pages and see when those pages were last updated.

Including a URL in your sitemap tells search engines that you consider it a quality landing page.

SEO best practices are always in flux; what was excellent advice yesterday on how to create an XML sitemap may have changed today. It is wise to know where each search engine your website targets publishes its own best practices.

Stay away from amplified opinions on blogs and forums. Concentrate on what is essential, such as Google’s XML sitemap requirements. Take the time to filter out the noise and form your best practice from the guidance of the major engines.

Format of an XML Sitemap

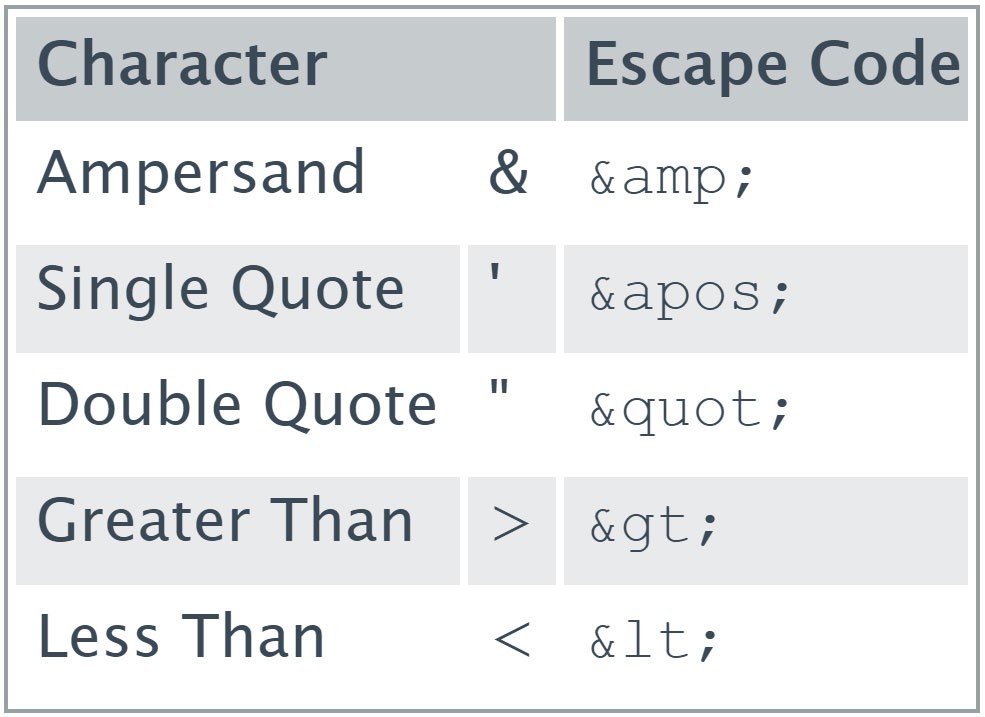

Sitemaps.org is the definitive source on the sitemap XML protocol and how to structure a sitemap. A properly formatted XML sitemap consists of a series of descriptive tags. The sitemap must be UTF-8 encoded, and every data value in it, including URLs, must be entity-escaped (so & is written as &amp;). For example:

(Image Credit: Sitemaps)

UTF-8 (Unicode Transformation Format, 8-bit) is a standard variable-width character encoding for electronic communication. Another formatting condition: each URL in the sitemap must be URL-escaped so the web server can read it.
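If you write sitemap XML by hand or with string templates, both kinds of escaping have to happen in the right order: URL-escape first, then entity-escape. The sketch below assumes that the URL's reserved characters (such as `&`, `?`, `=`) should stay as they are; `sitemap_loc` is a hypothetical name. XML libraries such as `ElementTree` perform the entity-escaping step for you.

```python
from urllib.parse import quote
from xml.sax.saxutils import escape

# escape() already handles &, < and >; these add the other two XML entities.
EXTRA_ENTITIES = {'"': "&quot;", "'": "&apos;"}

def sitemap_loc(url):
    """URL-escape non-ASCII characters and spaces, then entity-escape for XML."""
    # Keep URL syntax characters and existing %XX escapes untouched.
    url_escaped = quote(url, safe=":/?#[]@!$&'()*+,;=%")
    return escape(url_escaped, EXTRA_ENTITIES)

print(sitemap_loc("http://www.example.com/ümlat.php&q=name"))
```

The "ü" becomes its UTF-8 percent-encoding (`%C3%BC`) and the ampersand becomes `&amp;`, which matches the style of escaping shown on Sitemaps.org.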

The following are the sitemap tag definitions; some are required by Google’s XML sitemap requirements, while others are optional.

- <urlset> is a required tag. It encloses the whole file and references the current protocol standard through its namespace.

- <url> is the parent tag for each entry. The tags below are its child tags.

- <loc> is another required tag. It holds the page URL, which begins with a protocol such as HTTP or HTTPS and must end with a trailing slash if your web server requires it. This value must be less than 2,048 characters.

- <lastmod> is an optional tag that records when the file was last modified. It must be in W3C Datetime format. This tag is separate from the If-Modified-Since (304) header the server can return, and search engines may use information from the two sources differently.

- <changefreq> is another optional tag that tells search engines how frequently the page is likely to change. Valid values are:

- always (documents that change each time they are accessed)

- hourly

- daily

- weekly

- monthly

- yearly

- never (archived URLs)

These values are hints, not commands. Web crawlers may crawl pages marked hourly less frequently than that and pages marked yearly more frequently. Pages marked never may still be crawled occasionally to catch unexpected changes.

- <priority> sets the priority of a URL relative to other URLs on the same site. Values range from 0.0 to 1.0, and the default is 0.5. The value does not affect how your pages are compared with pages on other sites; it simply tells crawlers which pages the webmaster considers most important.

Assigned priorities are not likely to influence the search position. Webmasters can use this tag to increase the likelihood that the most important pages will be indexed.
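The tag rules above translate into a few simple checks. This is a sketch under stated assumptions: `check_entry` is a hypothetical helper, and it uses `datetime.fromisoformat` as an approximation of W3C Datetime. That covers `YYYY-MM-DD` and full timestamps with offsets, but not the year-only or year-month forms, and Python versions before 3.11 reject a trailing `Z`.

```python
from datetime import datetime

CHANGEFREQ_VALUES = {"always", "hourly", "daily", "weekly", "monthly", "yearly", "never"}
MAX_LOC_LENGTH = 2048  # <loc> must be less than 2,048 characters

def check_entry(loc, lastmod=None, changefreq=None, priority=None):
    """Return a list of problems with one <url> entry (empty list = looks valid)."""
    problems = []
    if not loc.startswith(("http://", "https://")):
        problems.append("loc must begin with a protocol such as http:// or https://")
    if len(loc) >= MAX_LOC_LENGTH:
        problems.append("loc must be less than 2,048 characters")
    if lastmod is not None:
        try:
            datetime.fromisoformat(lastmod)  # approximation of W3C Datetime
        except ValueError:
            problems.append(f"lastmod {lastmod!r} is not a W3C Datetime value")
    if changefreq is not None and changefreq not in CHANGEFREQ_VALUES:
        problems.append(f"changefreq {changefreq!r} is not a valid value")
    if priority is not None and not 0.0 <= priority <= 1.0:
        problems.append("priority must be between 0.0 and 1.0")
    return problems
```

Note that the changefreq values are lowercase in the protocol's schema, so `"Weekly"` is reported as invalid.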

Create a Sitemap

Creating a sitemap may seem overwhelming if this is your first time. Sitemaps are made for search engine crawl bots, not humans, and there are plenty of modern SEO tools that generate them for you.

It is vital to understand the structure of XML to make sure the tools are doing their job.

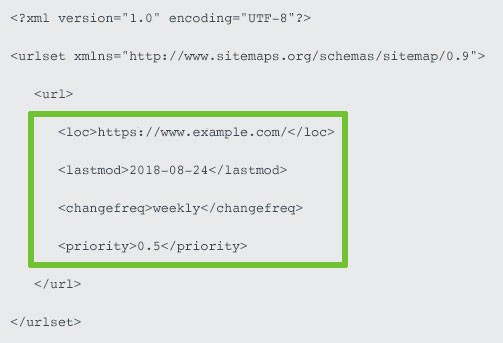

Let’s break down a simple sitemap:

- XML Declaration: this line tells the search engine bot that it is reading an XML file, along with the XML version and the character encoding. The sitemap must be UTF-8 encoded.

- URL Set: this element wraps all of the URLs in the sitemap and, through its namespace, tells bots which standard is used. The current standard is version 0.90, which is supported by Google, Microsoft, and Yahoo.

- URL: each URL must be declared to the bot inside a <loc> tag. It is crucial that these are absolute, canonical URLs, not relative ones. The <loc> tag is the only required element at this stage.

After the webmaster declares the URL, they can use any of the optional tags described above to tell bots more about each entry. By including only pages that matter for SEO, you help the crawl bot work more intelligently, which in turn helps your site reap the benefits of a good crawl session.

A crawl bot arrives at a website with predetermined parameters for crawling it, usually based on the results of its last visit. Do not waste valuable crawl bot time on less relevant pages; include only the best.
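The three parts just described (declaration, URL set, and one <url> per page with a required <loc>) can be produced with Python's standard library. A minimal sketch, assuming each entry is a dict with a `loc` key and optional `lastmod`, `changefreq`, and `priority` keys; `build_sitemap` is a hypothetical name.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Return sitemap XML text for a list of entry dicts."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        parts = urlsplit(entry["loc"])
        if not (parts.scheme and parts.netloc):  # relative URLs are not allowed
            raise ValueError(f"not an absolute URL: {entry['loc']}")
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = entry["loc"]  # the only required child
        for tag in ("lastmod", "changefreq", "priority"):
            if tag in entry:
                ET.SubElement(url, tag).text = str(entry[tag])
    # ElementTree entity-escapes text (& becomes &amp;); the declaration is added by hand.
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")

print(build_sitemap([{"loc": "https://www.example.com/", "lastmod": "2024-01-15", "priority": 1.0}]))
```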

Pages NOT to include in your sitemap:

- Utility and archived pages

- Pages blocked by the robots.txt file and noindex pages

- Duplicated and paginated pages and posts

- Non-canonical pages

- Replies to comments and email URLs

- Redirects, missing pages, and error pages
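The exclusion list above can be applied as a filter before the sitemap is generated. This sketch assumes a hypothetical `Page` record that your CMS or a site crawl would fill in; it covers the redirect, error, non-canonical, noindex, and robots.txt cases, while utility, archived, and comment pages would need site-specific rules.

```python
from dataclasses import dataclass

@dataclass
class Page:
    # Hypothetical page record; real data would come from your CMS or a crawl.
    url: str
    status: int                    # HTTP status code the page returns
    canonical: str                 # URL in the page's rel="canonical" tag
    noindex: bool = False          # page carries a noindex directive
    blocked_by_robots: bool = False

def sitemap_candidates(pages):
    """Keep only indexable, canonical pages that return 200 OK."""
    return [
        p.url for p in pages
        if p.status == 200            # drops redirects (3xx) and missing/error pages (4xx/5xx)
        and p.canonical == p.url      # drops non-canonical duplicates and paginated variants
        and not p.noindex
        and not p.blocked_by_robots
    ]
```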

Be careful with sitemap generators. Some are unreliable and practice bad SEO by including non-canonical URLs and noindex pages.

Having low-quality pages in a sitemap has dire SEO consequences:

- First and most important, they waste valuable crawl budget. That time could be better spent fully exploring only the best pages and links on your site.

- Low-quality pages steal link authority from pages that could rank higher. For example, the Ahrefs blog deleted a third of its insignificant posts and found that traffic increased.

- Users have a worse experience when they are directed to non-essential pages. Visitors are annoyed when they land on worthless pages and will quickly move away. Keep only the best pages on your website.

Different Types of Sitemaps

There are now more than 140 search engines and directories across the world. At last count, Google had indexed nearly 4.5 billion web pages. As the internet grows, so does the number of sitemap types, which raises the question: what role does sitemap.xml play in SEO?

As the complexity and size of websites continue to grow, so will sitemap technologies.

XML Sitemap

An XML sitemap is the standard file for getting your site noticed on the internet. However, it has some limitations: a single sitemap file can list no more than 50,000 URLs and can be no larger than 50 MB uncompressed.

If your sitemap exceeds either limit, it must be split into multiple sitemap files, which are then listed in a sitemap index file. Large sites can take a more granular approach by creating multiple index files if needed.
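Splitting by both limits and writing the index can be sketched as follows. The function names are hypothetical; the sketch splits on the 50,000-URL count first, then halves any chunk whose uncompressed size is still over 50 MB (52,428,800 bytes). Where the child files are hosted, and therefore the URLs passed to `sitemap_index`, is up to you.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000          # URLs per sitemap file
MAX_BYTES = 52_428_800     # 50 MB, measured uncompressed
DECL = '<?xml version="1.0" encoding="UTF-8"?>\n'

def _urlset_bytes(urls):
    urlset = ET.Element("urlset", xmlns=NS)
    for u in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = u
    return (DECL + ET.tostring(urlset, encoding="unicode")).encode("utf-8")

def _fit(chunk, max_bytes):
    data = _urlset_bytes(chunk)
    # A single URL cannot be split further, so it is returned as-is.
    if len(data) <= max_bytes or len(chunk) == 1:
        return [data]
    half = len(chunk) // 2  # still too large: split the chunk in two and retry
    return _fit(chunk[:half], max_bytes) + _fit(chunk[half:], max_bytes)

def split_sitemaps(urls, max_urls=MAX_URLS, max_bytes=MAX_BYTES):
    """Return sitemap files (UTF-8 bytes) that each respect both limits."""
    files = []
    for start in range(0, len(urls), max_urls):
        files.extend(_fit(urls[start:start + max_urls], max_bytes))
    return files

def sitemap_index(sitemap_urls):
    """Build a <sitemapindex> that lists the URL of each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=NS)
    for u in sitemap_urls:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = u
    return DECL + ET.tostring(index, encoding="unicode")
```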

An example of multiple index files, from the Google Webmaster Blog:

- http://example.com/stores/store2_sitemapindex.xml

- http://example.com/stores/store3_sitemapindex.xml

Creating separate index files is ideal for a multi-site arrangement, or for stores that want to submit content at different times of the day. The image below shows another example of an XML sitemap:

(Image Credit: Search Engine Journal)

XML Image Sitemap

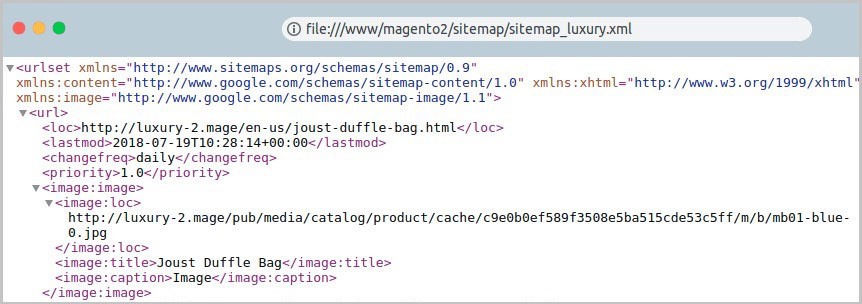

XML Image Sitemap is an excellent resource for sites with plenty of videos or images along with content.

Image sitemaps may be unnecessary because of modern-day SEO practices. Most websites have images embedded in their pages. Search engines crawl images along with any page content. Here is an example of an XML Image Sitemap:

(Image Credit: SwissUpLabs)

Alternatively, use JSON-LD markup with the schema.org ImageObject type, which gives the webmaster more customization options.
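A minimal sketch of that JSON-LD alternative, assuming you only need the `contentUrl`, `caption`, and optional `license` properties of schema.org's ImageObject; `image_object_jsonld` is a hypothetical helper, and the result is meant to be placed in the page's HTML.

```python
import json

def image_object_jsonld(content_url, caption, license_url=None):
    """Return a <script> block describing one image as a schema.org ImageObject."""
    data = {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": content_url,
        "caption": caption,
    }
    if license_url:
        data["license"] = license_url
    # "<\/" is a valid JSON escape and stops a caption from closing the <script> early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"

print(image_object_jsonld("https://example.com/photos/bike.jpg", "A red bicycle"))
```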

Image sitemaps take up too much crawl budget for most websites. If images are a fundamental part of your site, take a hard look at the options; e-commerce and gaming sites may benefit from an image sitemap.
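If you do need one, an image sitemap is an ordinary sitemap that adds Google's image extension namespace and an `<image:image>` block per image. A sketch assuming a hypothetical mapping from each page URL to the image URLs embedded on it; only the `<image:loc>` child is emitted.

```python
import xml.etree.ElementTree as ET

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMG = "http://www.google.com/schemas/sitemap-image/1.1"
ET.register_namespace("", SM)        # serialize sitemap tags without a prefix
ET.register_namespace("image", IMG)  # serialize image tags as image:...

def image_sitemap(pages):
    """pages: dict mapping a page URL to the list of image URLs embedded on it."""
    urlset = ET.Element(f"{{{SM}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SM}}}url")
        ET.SubElement(url, f"{{{SM}}}loc").text = page_url
        for img in image_urls:
            image = ET.SubElement(url, f"{{{IMG}}}image")
            ET.SubElement(image, f"{{{IMG}}}loc").text = img
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")

print(image_sitemap({"https://example.com/bikes/": ["https://example.com/img/red.jpg"]}))
```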

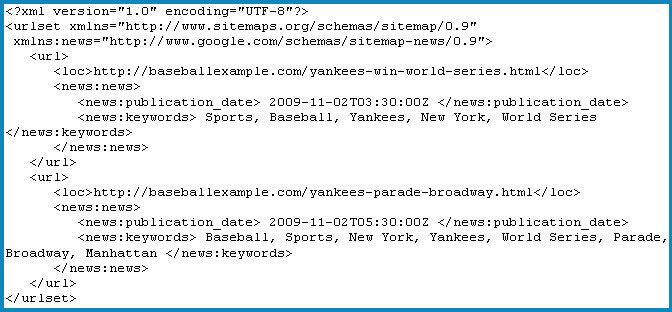

Video XML Files

Video XML files are similar to the image sitemap. If videos are critical to the success of your website, submit a video XML file for crawling. For instance:

(Image Credit: Moz)

Again, do not waste the limited time a bot spends on your site when it comes crawling.
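A video sitemap entry follows the same pattern with Google's video extension. This sketch assumes the thumbnail, title, description, and video-file URL are known for each page (Google accepts either `content_loc` or `player_loc`; only `content_loc` is shown), plus an optional duration in seconds. The `Video` record and sample values are hypothetical.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
VID = "http://www.google.com/schemas/sitemap-video/1.1"
ET.register_namespace("", SM)
ET.register_namespace("video", VID)

@dataclass
class Video:
    page_url: str        # page the video is embedded on
    thumbnail_loc: str
    title: str
    description: str
    content_loc: str     # URL of the video file itself
    duration: int = 0    # seconds; 0 means "omit the tag"

def video_sitemap(videos):
    urlset = ET.Element(f"{{{SM}}}urlset")
    for v in videos:
        url = ET.SubElement(urlset, f"{{{SM}}}url")
        ET.SubElement(url, f"{{{SM}}}loc").text = v.page_url
        video = ET.SubElement(url, f"{{{VID}}}video")
        for tag in ("thumbnail_loc", "title", "description", "content_loc"):
            ET.SubElement(video, f"{{{VID}}}{tag}").text = getattr(v, tag)
        if v.duration:
            ET.SubElement(video, f"{{{VID}}}duration").text = str(v.duration)
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")
```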

Dynamic XML

A dynamic XML sitemap updates automatically, so it does not fall behind the site’s content the way a static one does. Static sitemaps become obsolete as soon as content is changed or revised in any way, and they do not take advantage of the lastmod tag.

Dynamic sitemaps are designed for ever-changing content: the webmaster’s server automatically regenerates and submits a new sitemap whenever changes are made.

Any of these approaches can help the webmaster build a dynamic sitemap file:

- Have your developer write a custom script that regenerates the sitemap whenever content changes

- Use a sitemap generator tool

- The majority of CMS platforms offer plugins to generate a dynamic sitemap XML file.
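The custom-script option can be sketched as below. It assumes your CMS can hand over each page's URL and a timezone-aware last-modified time, and that a hypothetical save hook calls `refresh_sitemap`; both function names are illustrative. The file is rewritten only when its content would actually change, and lastmod is filled from real modification times.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from pathlib import Path

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def render(pages):
    """pages: iterable of (url, last_modified) with timezone-aware datetimes."""
    urlset = ET.Element("urlset", xmlns=NS)
    for url, modified in sorted(pages):
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # W3C Datetime, e.g. 2024-01-15T09:30:00+00:00
        ET.SubElement(entry, "lastmod").text = modified.isoformat(timespec="seconds")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")

def refresh_sitemap(path, pages):
    """Rewrite the sitemap only when its content would change; return True if rewritten."""
    path = Path(path)
    new_xml = render(pages)
    if path.exists() and path.read_text(encoding="utf-8") == new_xml:
        return False
    path.write_text(new_xml, encoding="utf-8")
    return True
```

Calling it from a content-save hook keeps the sitemap current without regenerating it on every request.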

HTML Sitemaps

HTML sitemaps are old-school indexation files and should only be used if XML files do not fit the application. HTML sitemaps were designed to help human users find content.

An HTML sitemap reflects the quality of a site’s internal linking, so consider carefully whether it has any reason to exist. If you have designed your site with a firm linking policy and an XML sitemap, ask yourself: is an HTML sitemap needed?

In most instances, no.

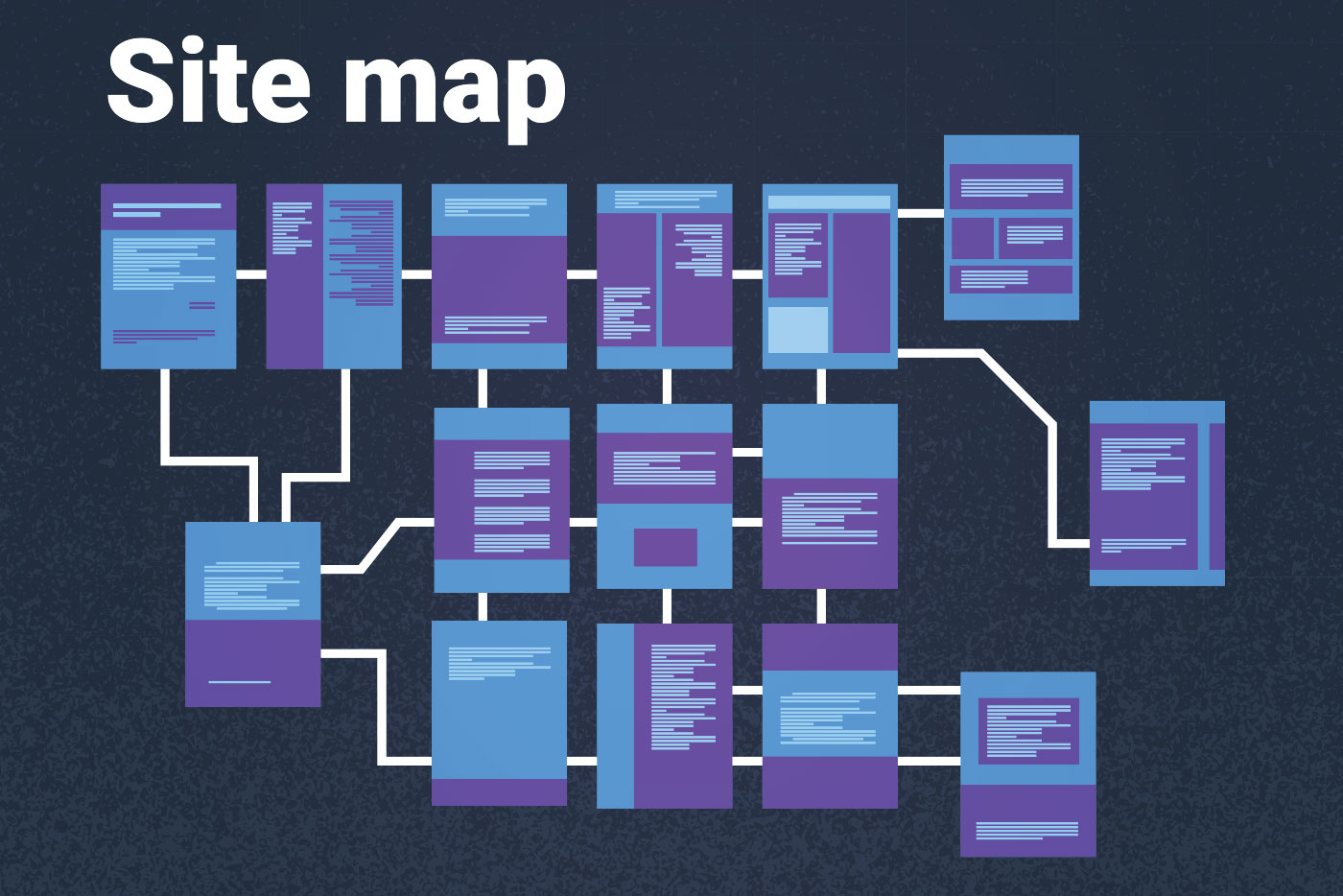

Google News Sitemaps

Google News sitemaps are restricted to sites registered with the search engine. They may only list news articles published in the last two days, up to a maximum of 1,000 URLs.

Google News Sitemaps do not support image or video sitemaps.

The search engine recommends using schema.org to specify the attributes of a thumbnail image. For instance:

(Image Credit: G-Squared Interactive)
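The two News restrictions (articles from the last two days, at most 1,000 URLs) are easy to enforce when generating the file. A sketch assuming each article is a `(url, title, published)` tuple with a timezone-aware publication time; the publication name and language are parameters you would supply. It emits the `news:publication`, `news:publication_date`, and `news:title` tags from Google's News sitemap namespace.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timedelta, timezone

SM = "http://www.sitemaps.org/schemas/sitemap/0.9"
NEWS = "http://www.google.com/schemas/sitemap-news/0.9"
ET.register_namespace("", SM)
ET.register_namespace("news", NEWS)

MAX_NEWS_URLS = 1000
WINDOW = timedelta(days=2)  # only articles from the last two days

def news_sitemap(articles, publication, language, now=None):
    """articles: list of (url, title, published) with timezone-aware datetimes."""
    now = now or datetime.now(timezone.utc)
    recent = [a for a in articles if now - a[2] <= WINDOW]
    recent.sort(key=lambda a: a[2], reverse=True)  # newest first, so the cap keeps them
    urlset = ET.Element(f"{{{SM}}}urlset")
    for url, title, published in recent[:MAX_NEWS_URLS]:
        entry = ET.SubElement(urlset, f"{{{SM}}}url")
        ET.SubElement(entry, f"{{{SM}}}loc").text = url
        news = ET.SubElement(entry, f"{{{NEWS}}}news")
        pub = ET.SubElement(news, f"{{{NEWS}}}publication")
        ET.SubElement(pub, f"{{{NEWS}}}name").text = publication
        ET.SubElement(pub, f"{{{NEWS}}}language").text = language
        ET.SubElement(news, f"{{{NEWS}}}publication_date").text = published.isoformat(timespec="seconds")
        ET.SubElement(news, f"{{{NEWS}}}title").text = title
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")
```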

Mobile sitemaps

Mobile sitemaps are a legacy format; they remain available to webmasters but are rarely needed. Mobile XML files are for feature phone pages, not smartphones, so they offer no benefit unless the website has specific URLs for that platform type.

Optimizing SEO With Sitemaps

Now that the basics have been covered, it is time to see why sitemaps are invaluable to websites. Webmasters should not include every page of their website in a sitemap, only the relevant pages with SEO authority.

Five SEO Reasons to Create a Sitemap

- Sitemaps are free and very easy to create. Most CMS platforms have several sitemap plugins and scripts; WordPress alone has dozens of options, from simple sitemap generators to comprehensive SEO suites.

Sitemaps are invaluable SEO tools. They encourage every search engine to index more of your website’s content and index it correctly.

It is advisable to create a sitemap as part of an overall SEO strategy. For WordPress, the Yoast SEO and All in One SEO plugins offer useful sitemap functionality.

- Improved ranking. Images and videos can improve your site’s search ranking by providing additional information to the crawl bots.

Using a sitemap for videos hosted at your site means webmasters can include additional metadata for each video. The information can include locations, title, description, duration, view count, and categories. The same data can be included for each image embedded in your site.

- Crawling priority. High-value pages are given a crawling priority with a sitemap. If there is no roadmap to a website, crawl bots have no direction once they hit your site.

Controlling the crawling process should be a top priority for webmasters, who can set a priority for each of their pages. For example, a homepage can have a priority of 1.0 (100%), while low-level documents may have 0.6 (60%). This flexibility helps define the value of your site, page by page.

- Discover more pages. Valid sitemaps help crawl bots discover more pages, meaning more content becomes indexed.

Sitemaps do not guarantee higher search results, only that more of the website’s content is discovered.

Sitemaps also help guard against duplicate content. It is frustrating to publish an original piece of content, only to find the same content on a competitor’s website later.

If two identical pieces of content are found, engines try to keep the original and discard the duplicate. Search engines will crawl a site more often if a valid sitemap is used, so your version is more likely to be discovered first. Engines do not always make the right call on which version is the original, but a sitemap gives you some protection.

- Links. Search engines, mainly Google, may add additional site links to a website if it includes a sitemap. Google’s algorithm may add valuable links under the site’s organic listing, giving users a more complete picture of the query. This process is automated; however, the chances are better if a website has a sitemap.

- Errors are minimized. If you give Google the location of a valid sitemap, the search engine returns the favor by reporting crawling errors and other crawl information to webmasters. Use Google Search Console and Bing Webmaster Tools to submit your sitemaps.

Bots crawl the site and report back their findings. This resource is invaluable to SEO best practices.
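Besides submitting through those consoles, the robots.txt auto-discovery mentioned at the start of this article lets any crawler find your sitemaps through a `Sitemap:` line that holds an absolute URL. A small sketch with a hypothetical `add_sitemap_lines` helper that appends missing lines to existing robots.txt text without duplicating ones already present.

```python
def add_sitemap_lines(robots_txt, sitemap_urls):
    """Append a 'Sitemap: <absolute URL>' line for each sitemap not already listed."""
    lines = robots_txt.splitlines()
    listed = {
        line.split(":", 1)[1].strip()
        for line in lines
        if line.lower().startswith("sitemap:")
    }
    for url in sitemap_urls:
        if url not in listed:
            lines.append(f"Sitemap: {url}")
    return "\n".join(lines) + "\n"

print(add_sitemap_lines("User-agent: *\nDisallow: /cart/\n", ["https://example.com/sitemap.xml"]))
```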

Diib®: Get the Latest Metrics on Your XML Sitemap

SEO starts at the granular level of a website in the root directory. Webmasters should understand the hierarchy of each site and page and build them with the search engines in mind. Diib Digital offers the most comprehensive and up-to-date metrics on your XML sitemap health and will alert you to possible problems long before they severely impact your ranking or traffic. Here are some of the features of our User Dashboard you’ll love:

- Sitemap tracking and health

- 24/7 Domain monitoring

- Bounce rate repair

- Post performance

- Broken pages where you have backlinks (404 checker)

- Keyword, backlink, and indexing monitoring and tracking tools

- User experience and mobile speed optimization

Get a free 60 second site scan or simply call 800-303-3510 to speak to one of our growth experts.

FAQs

This is really just a list of URLs for your website. It allows you, as the webmaster, to include other relevant information about each URL, such as: how often it changes, its relation to other URLs, its importance in the structure of your site, and when it was last updated.

The main difference between these two sitemaps is that XML sitemaps are created for search engines, and HTML sitemaps are written for humans trying to find something on your page.

The fastest and easiest way to get an XML sitemap is on SEO Site Checkup’s sitemap tool. You simply put in your URL and see if they find your sitemap.

A sitemap should include the pages and posts on your website that you want search engines to index.

Once in the life of the website IF there are absolutely no changes to content. When you have changes in content, 1-2 times per month will help get it indexed faster.
